Texture mapping[1][2][3] is a method for applying a texture (an image or other surface data) to the surface of a computer-generated graphic or 3D model. "Texture" in this context can be high-frequency detail, surface texture, or color.

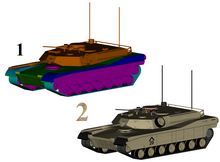

2: Same model with textures

History

The original technique was pioneered by Edwin Catmull in 1974 as part of his doctoral thesis.[4]

Texture mapping originally referred to diffuse mapping, a method that simply mapped pixels from a texture to a 3D surface ("wrapping" the image around the object). In recent decades, the advent of multi-pass rendering, multitexturing, mipmaps, and more complex mappings such as height mapping, bump mapping, normal mapping, displacement mapping, reflection mapping, specular mapping, occlusion mapping, and many other variations on the technique (controlled by a materials system) has made it possible to simulate near-photorealism in real time by vastly reducing the number of polygons and lighting calculations needed to construct a realistic and functional 3D scene.

1: Untextured sphere, 2: Texture and bump maps, 3: Texture map only, 4: Opacity and texture maps

Texture maps

A texture map[5][6] is an image applied (mapped) to the surface of a shape or polygon.[7] This may be a bitmap image or a procedural texture. Texture maps may be stored in common image file formats, referenced by 3D model formats or material definitions, and assembled into resource bundles.

They may have one to three dimensions, although two dimensions are most common for visible surfaces. For use with modern hardware, texture map data may be stored in swizzled or tiled orderings to improve cache coherency. Rendering APIs typically manage texture map resources (which may be located in device memory) as buffers or surfaces, and may allow 'render to texture' for additional effects such as post processing or environment mapping.

They usually contain RGB color data (either stored as direct color, compressed formats, or indexed color), and sometimes an additional channel for alpha blending (RGBA) especially for billboards and decal overlay textures. It is possible to use the alpha channel (which may be convenient to store in formats parsed by hardware) for other uses such as specularity.

Multiple texture maps (or channels) may be combined for control over specularity, normals, displacement, or subsurface scattering, e.g. for skin rendering.

Multiple texture images may be combined in texture atlases or array textures to reduce state changes for modern hardware. (They may be considered a modern evolution of tile map graphics.) Modern hardware often supports cube map textures with multiple faces for environment mapping.

Creation

Texture maps may be acquired by scanning or digital photography, designed in image manipulation software such as GIMP or Photoshop, or painted onto 3D surfaces directly in a 3D paint tool such as Mudbox or ZBrush.

Texture application

This process is akin to applying patterned paper to a plain white box. Every vertex in a polygon is assigned a texture coordinate (which in the 2D case is also known as a UV coordinate).[8] This may be done through explicit assignment of vertex attributes, manually edited in a 3D modelling package through UV unwrapping tools. It is also possible to associate a procedural transformation from 3D space to texture space with the material. This might be accomplished via planar projection or, alternatively, cylindrical or spherical mapping. More complex mappings may consider the distance along a surface to minimize distortion. These coordinates are interpolated across the faces of polygons to sample the texture map during rendering. Textures may be repeated or mirrored to extend a finite rectangular bitmap over a larger area, or they may have a one-to-one unique "injective" mapping from every piece of a surface (which is important for render mapping and light mapping, also known as baking).
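As an illustration of procedural coordinate assignment and of repeat/mirror addressing, here is a minimal sketch. The function names (`planar_uv`, `spherical_uv`, `wrap_repeat`, `wrap_mirror`) and the choice of axes are assumptions made for this example, not part of any particular API.

```python
import math

def planar_uv(x, y, z):
    """Planar projection along the z axis: drop z and use (x, y) as (u, v)."""
    return (x, y)

def spherical_uv(x, y, z):
    """Spherical mapping: u follows longitude around the y axis,
    v follows latitude; both land in [0, 1]. Assumes a non-zero point."""
    r = math.sqrt(x * x + y * y + z * z)
    u = 0.5 + math.atan2(z, x) / (2.0 * math.pi)
    v = 0.5 - math.asin(y / r) / math.pi
    return (u, v)

def wrap_repeat(t):
    """Repeat addressing: keep only the fractional part of the coordinate."""
    return t - math.floor(t)

def wrap_mirror(t):
    """Mirrored repeat: every other tile is flipped."""
    t = t % 2.0
    return 2.0 - t if t > 1.0 else t
```

With these definitions, a coordinate of 1.25 maps to 0.25 under repeat addressing and to 0.75 under mirrored addressing.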

Texture space

Texture mapping maps the model surface (or screen space during rasterization) into texture space; in this space, the texture map is visible in its undistorted form. UV unwrapping tools typically provide a view in texture space for manual editing of texture coordinates. Some rendering techniques such as subsurface scattering may be performed approximately by texture-space operations.

Multitexturing

Multitexturing is the use of more than one texture at a time on a polygon.[9] For instance, a light map texture may be used to light a surface as an alternative to recalculating that lighting every time the surface is rendered. Microtextures or detail textures are used to add higher frequency details, and dirt maps may add weathering and variation; this can greatly reduce the apparent periodicity of repeating textures. Modern graphics may use more than 10 layers, which are combined using shaders, for greater fidelity. Another multitexture technique is bump mapping, which allows a texture to directly control the facing direction of a surface for the purposes of its lighting calculations; it can give a very good appearance of a complex surface (such as tree bark or rough concrete) that takes on lighting detail in addition to the usual detailed coloring. Bump mapping became popular in video games once graphics hardware was powerful enough to accommodate it in real time.[10]
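A minimal sketch of how two layers might be combined per pixel is given below. The blend rules shown (multiplying by a light map, adding a detail texel centred on 0.5) are common conventions chosen for illustration; the function names and the `strength` parameter are assumptions, and real shaders may combine layers differently.

```python
def modulate(base, light):
    """Multiply an RGB base colour by an RGB light-map value,
    channel by channel. All values are assumed to lie in [0, 1]."""
    return tuple(b * l for b, l in zip(base, light))

def add_detail(color, detail, strength=0.5):
    """Offset a colour by a detail texel centred on 0.5, then clamp to [0, 1].
    A detail value of 0.5 leaves the colour unchanged."""
    return tuple(
        min(1.0, max(0.0, c + (d - 0.5) * strength))
        for c, d in zip(color, detail)
    )
```

For example, `modulate((1.0, 0.5, 0.25), (0.5, 0.5, 0.5))` halves every channel, giving `(0.5, 0.25, 0.125)`.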

Texture filtering

The way that samples (e.g. when viewed as pixels on the screen) are calculated from the texels (texture pixels) is governed by texture filtering. The cheapest method is nearest-neighbour interpolation, but bilinear interpolation and trilinear interpolation between mipmaps are two commonly used alternatives which reduce aliasing or jaggies. If a texture coordinate falls outside the texture, it is either clamped or wrapped. Anisotropic filtering better eliminates directional artefacts when viewing textures from oblique viewing angles.
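The difference between nearest-neighbour and bilinear filtering, and between wrapping and clamping, can be sketched on a single-channel texture stored as a list of rows. The helper names and the convention that texel centres sit at half-integer positions are assumptions of this example.

```python
import math

def texel(tex, x, y, wrap=True):
    """Fetch tex[y][x], wrapping or clamping out-of-range integer coordinates."""
    h, w = len(tex), len(tex[0])
    if wrap:
        x, y = x % w, y % h
    else:
        x, y = min(max(x, 0), w - 1), min(max(y, 0), h - 1)
    return tex[y][x]

def sample_nearest(tex, u, v, wrap=True):
    """Nearest-neighbour: pick the single texel containing (u, v)."""
    h, w = len(tex), len(tex[0])
    return texel(tex, math.floor(u * w), math.floor(v * h), wrap)

def sample_bilinear(tex, u, v, wrap=True):
    """Bilinear: weighted average of the four texels surrounding (u, v)."""
    h, w = len(tex), len(tex[0])
    # Texel centres sit at half-integer positions, hence the -0.5 shift.
    x, y = u * w - 0.5, v * h - 0.5
    x0, y0 = math.floor(x), math.floor(y)
    fx, fy = x - x0, y - y0
    top = texel(tex, x0, y0, wrap) * (1 - fx) + texel(tex, x0 + 1, y0, wrap) * fx
    bottom = (texel(tex, x0, y0 + 1, wrap) * (1 - fx)
              + texel(tex, x0 + 1, y0 + 1, wrap) * fx)
    return top * (1 - fy) + bottom * fy
```

On a 2×2 checker texture `[[0.0, 1.0], [1.0, 0.0]]`, sampling halfway between the two texels of the top row with `sample_bilinear(tex, 0.5, 0.25, wrap=False)` blends them to 0.5, whereas nearest-neighbour sampling returns one texel or the other.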

Texture streaming

Texture streaming is a means of using data streams for textures, where each texture is available in two or more different resolutions, so that the engine can determine which version to load into memory and use, based on draw distance from the viewer and how much memory is available for textures. Texture streaming allows a rendering engine to use low-resolution textures for objects far away from the viewer's camera, and to resolve those into more detailed textures, read from a data source, as the point of view nears the objects.
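One hypothetical selection policy is sketched below: the resolution level is chosen from the draw distance and then coarsened until it fits a memory budget. The function names, the `full_res_distance` threshold, and the "one level per doubling of distance" rule are assumptions for illustration; actual engines use their own heuristics.

```python
def pick_level(distance, num_levels, full_res_distance=10.0):
    """Level 0 is full resolution. Each doubling of the distance beyond
    full_res_distance selects the next coarser level, up to the coarsest."""
    level = 0
    threshold = full_res_distance
    while distance > threshold and level < num_levels - 1:
        threshold *= 2.0
        level += 1
    return level

def fit_to_budget(level, level_sizes, budget_bytes):
    """Fall back to coarser levels until the chosen one fits the budget
    (or the coarsest level is reached)."""
    while level < len(level_sizes) - 1 and level_sizes[level] > budget_bytes:
        level += 1
    return level
```

A streaming system would then request the returned level from the data source and swap it in once loaded.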

Baking

As an optimization, it is possible to render detail from a complex, high-resolution model or expensive process (such as global illumination) into a surface texture (possibly on a low-resolution model). Baking is also known as render mapping. This technique is most commonly used for light maps, but may also be used to generate normal maps and displacement maps. Some computer games (e.g. Messiah) have used this technique. The original Quake software engine used on-the-fly baking to combine light maps and colour maps ("surface caching").

Baking can be used as a form of level of detail generation, where a complex scene with many different elements and materials may be approximated by a single element with a single texture, which is then algorithmically reduced for lower rendering cost and fewer drawcalls. It is also used to take high-detail models from 3D sculpting software and point cloud scanning and approximate them with meshes more suitable for realtime rendering.

Rasterisation algorithms

Various techniques have evolved in software and hardware implementations. Each offers different trade-offs in precision, versatility and performance.

Affine texture mapping

Affine texture mapping linearly interpolates texture coordinates across a surface, and so is the fastest form of texture mapping. Some software and hardware (such as the original PlayStation) project vertices in 3D space onto the screen during rendering and linearly interpolate the texture coordinates in screen space between them. This may be done by incrementing fixed point UV coordinates, or by an incremental error algorithm akin to Bresenham's line algorithm.
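The fixed-point variant can be sketched for a single scanline as follows. This is an illustrative sketch only: the 16.16 format, the function name `affine_span`, and the use of texel units for the endpoint coordinates are assumptions, not a description of any specific renderer.

```python
FRAC_BITS = 16  # 16.16 fixed point: 16 integer bits, 16 fractional bits

def affine_span(u0, v0, u1, v1, length):
    """Return integer texel coordinates for `length` pixels of one scanline.

    (u0, v0) and (u1, v1) are the texture coordinates, in texel units,
    at the first and last pixel. u and v advance by a constant fixed-point
    increment per pixel, so the inner loop needs only additions and shifts.
    """
    one = 1 << FRAC_BITS
    steps = max(length - 1, 1)
    u = int(u0 * one)
    v = int(v0 * one)
    du = (int(u1 * one) - u) // steps
    dv = (int(v1 * one) - v) // steps
    texels = []
    for _ in range(length):
        texels.append((u >> FRAC_BITS, v >> FRAC_BITS))
        u += du
        v += dv
    return texels
```

For example, `affine_span(0, 0, 8, 4, 5)` steps evenly through `(0, 0), (2, 1), (4, 2), (6, 3), (8, 4)`.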

Unless a polygon is perpendicular to the viewing direction, this leads to noticeable distortion under perspective transformations (see figure: the checker box texture appears bent), especially as primitives come close to the camera. Such distortion may be reduced by subdividing the polygon into smaller ones.

For the case of rectangular objects, using quad primitives can look less incorrect than the same rectangle split into triangles, but because interpolating 4 points adds complexity to the rasterization, most early implementations preferred triangles only. Some hardware, such as the forward texture mapping used by the Nvidia NV1, was able to offer efficient quad primitives. With perspective correction (see below) triangles become equivalent and this advantage disappears.

For rectangular objects that are at right angles to the viewer, like floors and walls, the perspective only needs to be corrected in one direction across the screen, rather than both. The correct perspective mapping can be calculated at the left and right edges of the floor, and then an affine linear interpolation across that horizontal span will look correct, because every pixel along that line is the same distance from the viewer.

Perspective correctness

Perspective correct texturing accounts for the vertices' positions in 3D space, rather than simply interpolating coordinates in 2D screen space.[11] This achieves the correct visual effect but it is more expensive to calculate.[11]

To perform perspective correction of the texture coordinates $u$ and $v$, with $z$ being the depth component from the viewer's point of view, we can take advantage of the fact that the values $\frac{1}{z}$, $\frac{u}{z}$, and $\frac{v}{z}$ are linear in screen space across the surface being textured. In contrast, the original $z$, $u$ and $v$, before the division, are not linear across the surface in screen space. We can therefore linearly interpolate these reciprocals across the surface, computing corrected values at each pixel, to result in a perspective correct texture mapping.

To do this, we first calculate the reciprocals at each vertex of our geometry (3 points for a triangle). For vertex $n$ we have $\frac{u_n}{z_n}$, $\frac{v_n}{z_n}$, and $\frac{1}{z_n}$. Then, we linearly interpolate these reciprocals between the vertices (e.g., using barycentric coordinates), resulting in interpolated values across the surface. At a given point $i$, this yields the interpolated $\left(\frac{u}{z}\right)_i$, $\left(\frac{v}{z}\right)_i$, and $\left(\frac{1}{z}\right)_i$. Note that $\left(\frac{u}{z}\right)_i$ and $\left(\frac{v}{z}\right)_i$ cannot yet be used as our texture coordinates, as our division by $z$ altered their coordinate system.

To correct back to the $u$, $v$ space we first calculate the corrected $z$ by again taking the reciprocal: $z_i = 1 / \left(\frac{1}{z}\right)_i$. Then we use this to correct our interpolated values: $u_i = \left(\frac{u}{z}\right)_i \cdot z_i$ and $v_i = \left(\frac{v}{z}\right)_i \cdot z_i$.[12]

This correction makes it so that in parts of the polygon that are closer to the viewer the difference from pixel to pixel between texture coordinates is smaller (stretching the texture wider) and in parts that are farther away this difference is larger (compressing the texture).

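- Affine texture mapping directly interpolates a texture coordinate $u_\alpha$ between two endpoints $u_0$ and $u_1$: $u_\alpha = (1 - \alpha) u_0 + \alpha u_1$, where $0 \le \alpha \le 1$.

- Perspective correct mapping interpolates after dividing by depth $z$, then uses its interpolated reciprocal to recover the correct coordinate: $u_\alpha = \frac{(1 - \alpha) \frac{u_0}{z_0} + \alpha \frac{u_1}{z_1}}{(1 - \alpha) \frac{1}{z_0} + \alpha \frac{1}{z_1}}$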

3D graphics hardware typically supports perspective correct texturing.
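A minimal numerical sketch comparing affine and perspective correct interpolation of a single coordinate between two endpoints is given below. The function names are chosen for this example; `alpha` is the fraction of the way across the span in screen space, and `z0`, `z1` are the (non-zero) depths at the endpoints.

```python
def lerp(a, b, t):
    """Linear interpolation between a and b for t in [0, 1]."""
    return (1.0 - t) * a + t * b

def affine_u(u0, u1, alpha):
    """Affine mapping: interpolate u directly in screen space."""
    return lerp(u0, u1, alpha)

def perspective_u(u0, z0, u1, z1, alpha):
    """Perspective correct mapping: interpolate u/z and 1/z in screen
    space, then divide to recover u."""
    u_over_z = lerp(u0 / z0, u1 / z1, alpha)
    one_over_z = lerp(1.0 / z0, 1.0 / z1, alpha)
    return u_over_z / one_over_z
```

For a span running from `u0 = 0` at depth 1 to `u1 = 1` at depth 3, the affine result halfway across the screen is 0.5, while the perspective correct result is 0.25: the nearer half of the surface occupies more of the screen. When both endpoints have the same depth, the two functions agree.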

Various techniques have evolved for rendering texture mapped geometry into images with different quality/precision tradeoffs, which can be applied to both software and hardware.

Classic software texture mappers generally did only simple mapping with at most one lighting effect (typically applied through a lookup table), and the perspective correctness was about 16 times more expensive.

Restricted camera rotation

The Doom engine restricted the world to vertical walls and horizontal floors/ceilings, with a camera that could only rotate about the vertical axis. This meant the walls would have a constant depth coordinate along a vertical line and the floors/ceilings would have a constant depth along a horizontal line. After performing one perspective correction calculation for the depth, the rest of the line could use fast affine mapping. Some later renderers of this era simulated a small amount of camera pitch with shearing, which allowed the appearance of greater freedom whilst using the same rendering technique.

Some engines were able to render texture mapped heightmaps (e.g. NovaLogic's Voxel Space, and the engine for Outcast) via Bresenham-like incremental algorithms, producing the appearance of a texture mapped landscape without the use of traditional geometric primitives.[13]

Subdivision for perspective correction

Every triangle can be further subdivided into groups of about 16 pixels in order to achieve two goals: first, keeping the arithmetic mill busy at all times; second, producing faster arithmetic results.

World space subdivision

For perspective texture mapping without hardware support, a triangle is broken down into smaller triangles for rendering and affine mapping is used on them. The reason this technique works is that the distortion of affine mapping becomes much less noticeable on smaller polygons. The Sony PlayStation made extensive use of this because it only supported affine mapping in hardware but had a relatively high triangle throughput compared to its peers.

Screen space subdivision

Software renderers generally preferred screen-space subdivision because it has less overhead. Additionally, they try to do linear interpolation along a line of pixels to simplify the set-up (compared to 2D affine interpolation) and thus further reduce the overhead. (Affine texture mapping also does not fit into the small number of registers of the x86 CPU; the 68000 or any RISC processor is much better suited.)

A different approach was taken for Quake, which would calculate perspective correct coordinates only once every 16 pixels of a scanline and linearly interpolate between them, effectively running at the speed of linear interpolation because the perspective correct calculation runs in parallel on the co-processor.[14] Because the polygons are rendered independently, it may be possible to switch between spans and columns or diagonal directions depending on the orientation of the polygon normal to achieve a more constant z, but the effort seems not to be worth it.
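The idea of computing exact coordinates only at every 16th pixel and interpolating affinely in between can be sketched as follows. This is an illustrative reconstruction of the general scheme, not Quake's actual code; the function name, the floating-point arithmetic, and the handling of the final partial span are assumptions.

```python
def subdivided_span(u0, z0, u1, z1, length, step=16):
    """Texture coordinate u for each of `length` pixels on a scanline.

    u is perspective correct at every `step`-th pixel (and at the last
    pixel) and linearly interpolated between those anchor points.
    """
    denom = max(length - 1, 1)

    def exact_u(x):
        # Perspective correct u at pixel x: interpolate u/z and 1/z, divide.
        a = x / denom
        u_over_z = (1 - a) * u0 / z0 + a * u1 / z1
        one_over_z = (1 - a) / z0 + a / z1
        return u_over_z / one_over_z

    out = []
    x = 0
    left = exact_u(0)
    while x < length:
        end = min(x + step, length - 1)   # next anchor pixel
        right = exact_u(end)              # one division per sub-span
        n = max(end - x, 1)
        for i in range(min(step, length - x)):
            out.append(left + (right - left) * i / n)  # affine in between
        left = right
        x += step
    return out
```

With `u` running from 0 at depth 1 to 1 at depth 4 over 33 pixels, pixels 0, 16 and 32 receive the exact values (0, 0.2 and 1), while pixel 8 receives the affine midpoint 0.1 instead of the exact value of roughly 0.077; the error stays small because each sub-span is short.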

Other techniques

Another technique was approximating the perspective with a faster calculation, such as a polynomial. Still another technique uses the 1/z values of the last two drawn pixels to linearly extrapolate the next value. The division is then done starting from those values so that only a small remainder has to be divided,[15] but the amount of bookkeeping makes this method too slow on most systems.

Finally, the Build engine extended the constant distance trick used for Doom by finding the line of constant distance for arbitrary polygons and rendering along it.

Hardware implementations

Texture mapping hardware was originally developed for simulation (e.g. as implemented in the Evans and Sutherland ESIG and Singer-Link Digital Image Generators (DIG)), for professional graphics workstations such as those from Silicon Graphics, and for broadcast digital video effects machines such as the Ampex ADO. It later appeared in arcade cabinets, consumer video game consoles, and PC video cards in the mid-1990s. In flight simulation, texture mapping provided important motion and altitude cues necessary for pilot training that were not available on untextured surfaces. It was also in flight simulation applications that texture mapping was implemented for real-time processing, with prefiltered texture patterns stored in memory for real-time access by the video processor.[16]

Modern graphics processing units (GPUs) provide specialised fixed function units called texture samplers, or texture mapping units, to perform texture mapping, usually with trilinear filtering or better multi-tap anisotropic filtering, and hardware for decoding specific formats such as DXTn. As of 2016, texture mapping hardware is ubiquitous, as most SoCs contain a suitable GPU.

Some hardware combines texture mapping with hidden-surface determination in tile based deferred rendering or scanline rendering; such systems only fetch the visible texels at the expense of using greater workspace for transformed vertices. Most systems have settled on the Z-buffering approach, which can still reduce the texture mapping workload with front-to-back sorting.

Among earlier graphics hardware, there were two competing paradigms of how to deliver a texture to the screen:

- Forward texture mapping iterates through each texel on the texture, and decides where to place it on the screen.

- Inverse texture mapping instead iterates through pixels on the screen, and decides what texel to use for each.

Inverse texture mapping is the method which has become standard in modern hardware.

Inverse texture mapping

With this method, a pixel on the screen is mapped to a point on the texture. Each vertex of a rendering primitive is projected to a point on the screen, and each of these points is mapped to a u,v texel coordinate on the texture. A rasterizer will interpolate between these points to fill in each pixel covered by the primitive.

The primary advantage is that each pixel covered by a primitive will be traversed exactly once. Once a primitive's vertices are transformed, the amount of remaining work scales directly with how many pixels it covers on the screen.

The main disadvantage versus forward texture mapping is that the memory access pattern in the texture space will not be linear if the texture is at an angle to the screen. This disadvantage is often addressed by texture caching techniques, such as the swizzled texture memory arrangement.

The linear interpolation can be used directly for simple and efficient affine texture mapping, but can also be adapted for perspective correctness.

Forward texture mapping

Forward texture mapping maps each texel of the texture to a pixel on the screen. After transforming a rectangular primitive to a place on the screen, a forward texture mapping renderer iterates through each texel on the texture, splatting each one onto a pixel of the frame buffer.

This was used by some hardware, such as the 3DO, the Sega Saturn and the NV1.

The primary advantage is that the texture will be accessed in a simple linear order, allowing very efficient caching of the texture data. However, this benefit is also its disadvantage: as a primitive gets smaller on screen, it still has to iterate over every texel in the texture, causing many pixels to be overdrawn redundantly.
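The difference in loop structure between the two paradigms, and the overdraw that forward mapping incurs on small primitives, can be sketched for the simplest case of an axis-aligned rectangle. The function names and the restriction to axis-aligned scaling are simplifying assumptions of this example; real hardware handled arbitrarily transformed quads.

```python
def inverse_map(tex, dst_w, dst_h):
    """Iterate over destination pixels and look up the texel for each."""
    th, tw = len(tex), len(tex[0])
    frame = [[None] * dst_w for _ in range(dst_h)]
    writes = 0
    for y in range(dst_h):
        for x in range(dst_w):
            frame[y][x] = tex[y * th // dst_h][x * tw // dst_w]
            writes += 1
    return frame, writes

def forward_map(tex, dst_w, dst_h):
    """Iterate over texels in linear order and splat each onto the
    destination pixel it lands on."""
    th, tw = len(tex), len(tex[0])
    frame = [[None] * dst_w for _ in range(dst_h)]
    writes = 0
    for ty in range(th):
        for tx in range(tw):
            frame[ty * dst_h // th][tx * dst_w // tw] = tex[ty][tx]
            writes += 1
    return frame, writes
```

Drawing a 4×4 texture into a 2×2 screen rectangle takes 4 writes with inverse mapping (one per pixel) but 16 with forward mapping (one per texel), so each pixel is overwritten several times.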

This method is also well suited for rendering quad primitives rather than reducing them to triangles, which provided an advantage when perspective correct texturing was not available in hardware. This is because the affine distortion of a quad looks less incorrect than the same quad split into two triangles (see affine texture mapping above). The NV1 hardware also allowed a quadratic interpolation mode to provide an even better approximation of perspective correctness.

The existing hardware implementations did not provide effective UV coordinate mapping, which became an important technique for 3D modelling and assisted in clipping the texture correctly when the primitive goes over the edge of the screen. These shortcomings could have been addressed with further development, but GPU design has since mostly moved toward inverse mapping.

Applications

Beyond 3D rendering, the availability of texture mapping hardware has inspired its use for accelerating other tasks:

Tomography

It is possible to use texture mapping hardware to accelerate both the reconstruction of voxel data sets from tomographic scans, and to visualize the results.[17]

User interfaces

Many user interfaces use texture mapping to accelerate animated transitions of screen elements, e.g. Exposé in Mac OS X.

See also

- 2.5D

- 3D computer graphics

- Mipmap

- Materials system

- Parametrization

- Texture synthesis

- Texture atlas

- Texture splatting – a technique for combining textures

- Shader (computer graphics)

References

- Wang, Huamin. "Texture Mapping" (PDF). Department of Computer Science and Engineering. Ohio State University. Archived from the original (PDF) on 2016-03-04. Retrieved 2016-01-15.

- "Texture Mapping" (PDF). www.inf.pucrs.br. Retrieved September 15, 2019.

- "CS 405 Texture Mapping". www.cs.uregina.ca. Retrieved 22 March 2018.

- Catmull, E. (1974). A subdivision algorithm for computer display of curved surfaces (PDF) (PhD thesis). University of Utah.

- Fosner, Ron (January 1999). "DirectX 6.0 Goes Ballistic With Multiple New Features And Much Faster Code". Microsoft.com. Archived from the original on October 31, 2016. Retrieved September 15, 2019.

- Hvidsten, Mike (Spring 2004). "The OpenGL Texture Mapping Guide". homepages.gac.edu. Archived from the original on 23 May 2019. Retrieved 22 March 2018.

- Radoff, Jon (August 22, 2008). "Anatomy of an MMORPG". radoff.com. Archived from the original on 2009-12-13. Retrieved 2009-12-13.

- Roberts, Susan. "How to use textures". Archived from the original on 24 September 2021. Retrieved 20 March 2021.

- Blythe, David. Advanced Graphics Programming Techniques Using OpenGL. Siggraph 1999. (PDF) (see: Multitexture)

- Kautz, Jan; Heidrich, Wolfgang; Seidel, Hans-Peter. "Real-Time Bump Map Synthesis". Max-Planck-Institut für Informatik; University of British Columbia.

- "The Next Generation 1996 Lexicon A to Z: Perspective Correction". Next Generation. No. 15. Imagine Media. March 1996. p. 38.

- Kalms, Mikael (1997). "Perspective Texturemapping". www.lysator.liu.se. Retrieved 2020-03-27.

- "Voxel terrain engine", introduction. In a coder's mind, 2005 (archived 2013).

- Abrash, Michael. Michael Abrash's Graphics Programming Black Book Special Edition. The Coriolis Group, Scottsdale Arizona, 1997. ISBN 1-57610-174-6 (PDF archived 2007-03-11 at the Wayback Machine) (Chapter 70, pg. 1282)

- US 5739818, Spackman, John Neil, "Apparatus and method for performing perspectively correct interpolation in computer graphics", issued 1998-04-14

- Yan, Johnson (August 1985). "Advances in Computer-Generated Imagery for Flight Simulation". IEEE. 5 (8): 37–51. doi:10.1109/MCG.1985.276213.

- "texture mapping for tomography".

Software

- TexRecon (archived 2021-11-27 at the Wayback Machine): open-source software for texturing 3D models, written in C++

External links

- Introduction into texture mapping using C and SDL (PDF)

- Programming a textured terrain using XNA/DirectX, from www.riemers.net

- Perspective correct texturing

- Time Texturing Texture mapping with bezier lines

- Polynomial Texture Mapping Archived 2019-03-07 at the Wayback Machine Interactive Relighting for Photos

- 3 Métodos de interpolación a partir de puntos (in spanish) Methods that can be used to interpolate a texture knowing the texture coords at the vertices of a polygon

- 3D Texturing Tools